The GPU Memory Wall in LLM Serving

Why GPU memory is the bottleneck, and what the GH200 changes.

Part 1 of 3 from my Master's thesis at ETH Zurich.

April 11, 2026

Introduction

The widespread adoption of large language models has placed increasing demands on GPU memory, which must hold both model parameters and the key-value (KV) cache used during autoregressive generation. Because the KV cache grows linearly with sequence length and batch size, it competes directly with model parameters for limited high-bandwidth memory (HBM), making GPU memory the primary bottleneck for serving throughput.

A natural strategy is to extend available memory by offloading model parameters to CPU DRAM. Prior work

The NVIDIA GH200 Grace Hopper Superchip fundamentally changes this tradeoff. With up to 900 GB/s bidirectional CPU–GPU bandwidth over NVLink-C2C — roughly 7× that of PCIe Gen5 — the GH200 transforms offloading from a last resort into a viable strategy for actively improving serving throughput. Recent systems

This is the first post in a three-part series based on my Master’s thesis at ETH Zurich. In this post, I provide the necessary background on LLM serving and the GH200 architecture, and survey the existing offloading landscape. In Part 2, I will characterize vLLM’s behavior on the GH200 and identify the design requirements for an effective offloading system. In Part 3, I will present our dynamic parameter offloading system that achieves 10–22% throughput improvements over default vLLM while maintaining full compatibility with CUDA graphs and prefix caching.

LLM Architecture

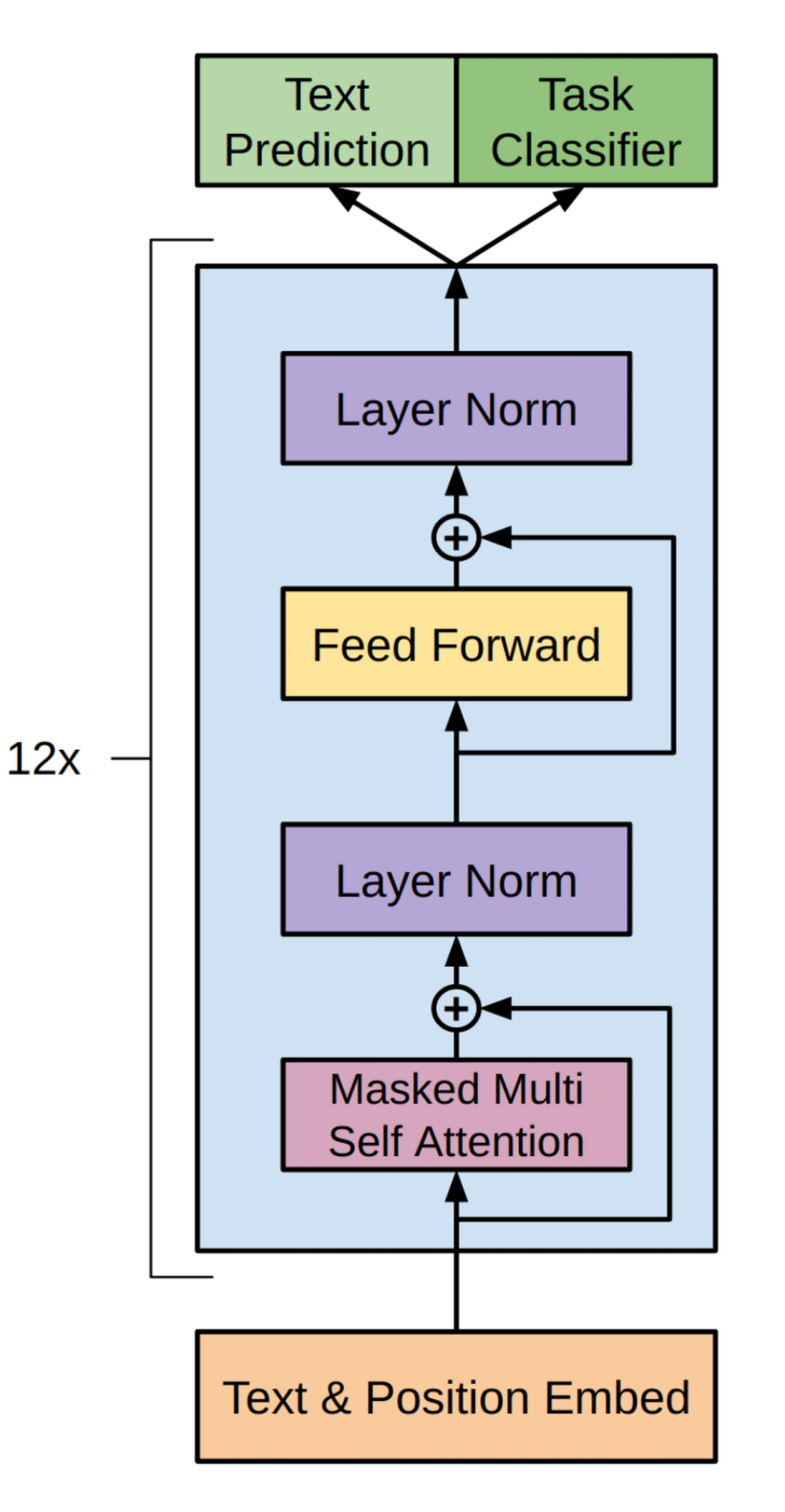

Modern large language models are built on the transformer architecture, originally introduced for machine translation

Decoder-Only Transformers

Decoder-only transformers

The core operation in each transformer block is masked multi-head self-attention, which computes pairwise relationships between all input token embeddings, producing an $n \times n$ attention matrix for a sequence of $n$ tokens. A causal mask restricts each token to attend only to itself and preceding tokens, preventing the model from accessing future positions during generation. This masking enables autoregressive generation, where each new token is predicted based solely on preceding tokens. From a computational perspective, the quadratic complexity of attention in sequence length significantly increases both memory consumption and compute cost, particularly for long contexts.

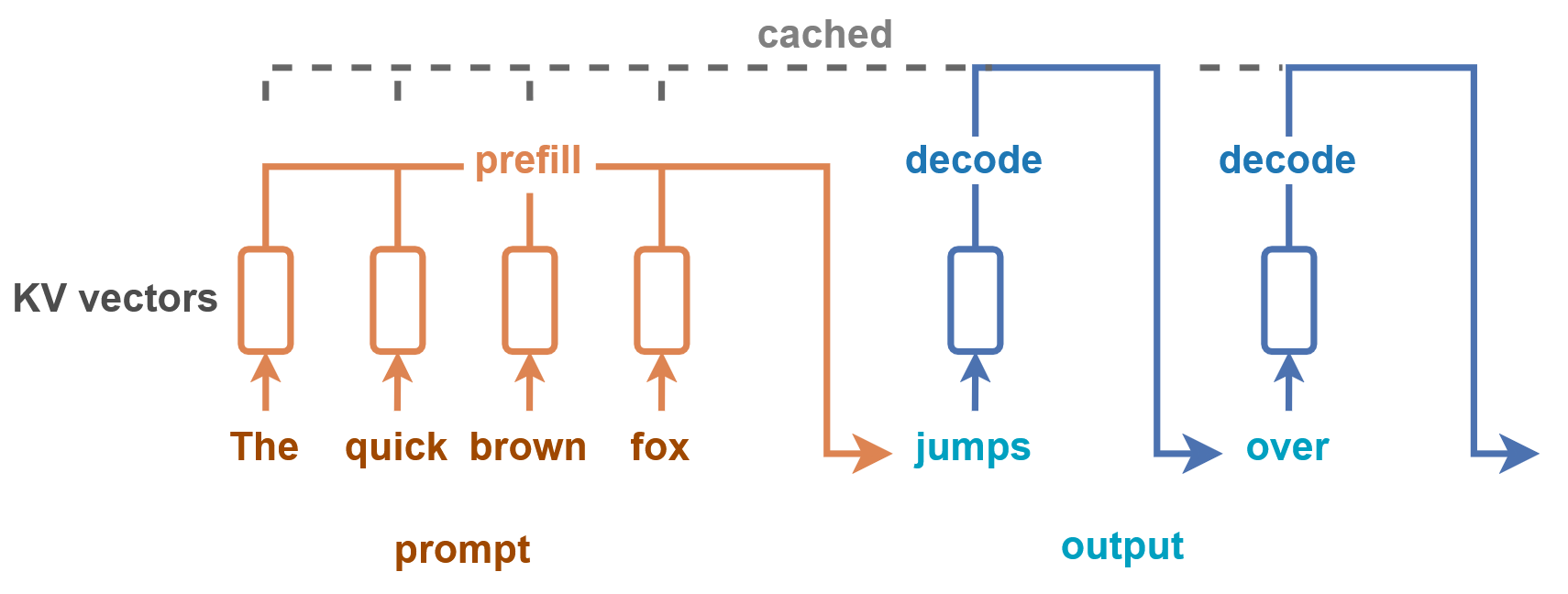

Key-Value Caching

Naive autoregressive generation would recompute attention over all preceding tokens at each decode step, leading to substantial redundant computation. Key-value (KV) caching eliminates this redundancy by storing the key and value vectors of every processed token. During decoding, only the new token computes attention against these cached states, avoiding recomputation between previous tokens and reducing each decode step from $O(n^2)$ to $O(n)$.
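To make the equivalence concrete, here is a minimal NumPy sketch of single-head attention. It is an illustration under simplifying assumptions (one head, no learned projections, random inputs), and the function names are my own rather than from any serving engine. It checks that attending one new query against cached keys and values gives the same output as recomputing full causal attention.

```python
import numpy as np

def softmax(x):
    # Numerically stable softmax over the last axis.
    x = x - x.max(axis=-1, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=-1, keepdims=True)

def full_causal_attention(q, k, v):
    """Recompute attention for all n tokens at once: n x n scores."""
    n, d = q.shape
    scores = q @ k.T / np.sqrt(d)
    future = np.triu(np.ones((n, n), dtype=bool), k=1)  # True above the diagonal
    scores = np.where(future, -np.inf, scores)          # causal mask
    return softmax(scores) @ v

def decode_step_with_cache(q_new, k_cache, v_cache):
    """Attend a single new query against the cached keys/values: n scores."""
    d = q_new.shape[-1]
    scores = q_new @ k_cache.T / np.sqrt(d)
    return softmax(scores) @ v_cache

rng = np.random.default_rng(0)
n, d = 6, 8
q, k, v = (rng.standard_normal((n, d)) for _ in range(3))

reference = full_causal_attention(q, k, v)

# Incremental decode: append each token's K/V to the cache, then attend.
k_cache = np.empty((0, d))
v_cache = np.empty((0, d))
outputs = []
for t in range(n):
    k_cache = np.vstack([k_cache, k[t]])
    v_cache = np.vstack([v_cache, v[t]])
    outputs.append(decode_step_with_cache(q[t], k_cache, v_cache))
cached = np.stack(outputs)

max_abs_diff = float(np.abs(reference - cached).max())
print(f"max |full - cached| = {max_abs_diff:.2e}")
```

The cached path never looks at future tokens because they are not in the cache yet, so no explicit mask is needed during decode.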

With KV caching, text generation divides into two phases with distinct computational characteristics. During the prefill phase, the model processes the entire input prompt in a single forward pass, computing attention across all input tokens and populating the KV cache. This phase is compute-bound, with large matrix multiplications that exhibit high arithmetic intensity. The decode phase then generates output tokens one at a time, with each step attending to the cached KV states. Despite reduced computation per token, decode is typically memory-bound: each step must load model weights and the entire KV cache from memory while performing relatively little computation.

However, KV cache memory grows linearly with both sequence length and batch size, often becoming the primary memory bottleneck in LLM serving. At long context lengths or large batch sizes, KV cache memory can exceed the memory required for model parameters. This tension between cache capacity and throughput motivates much of the memory management work discussed later in this post.
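The linear growth is easy to quantify with the standard sizing formula: two tensors (keys and values) per layer, each holding one vector per KV head and token. The sketch below uses a hypothetical model shape (32 layers, 8 KV heads, head dimension 128, 8 billion FP16 parameters) that I picked for illustration; it is not a measurement from the thesis.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim,
                   seq_len, batch_size, bytes_per_elem=2):
    """KV cache size in bytes; the leading 2 counts keys and values."""
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * batch_size * bytes_per_elem)

# Hypothetical model shape (assumption for illustration only).
LAYERS, KV_HEADS, HEAD_DIM = 32, 8, 128
NUM_PARAMS = 8e9

weight_bytes = NUM_PARAMS * 2                                  # FP16/BF16 weights
per_token = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, 1, 1)   # 128 KiB per token
large_batch = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM,
                             seq_len=8192, batch_size=32)      # 32 GiB

print(f"KV cache per token: {per_token / 2**10:.0f} KiB")
print(f"KV cache at 8192 tokens x 32 requests: {large_batch / 2**30:.0f} GiB")
print(f"Model weights: {weight_bytes / 1e9:.0f} GB")
```

With these assumed numbers, 32 concurrent requests at 8192 tokens each need about twice as much memory for the KV cache as for the weights themselves.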

LLM Serving

As LLM inference is projected to consume an increasing fraction of global datacenter capacity, significant research effort has focused on improving serving efficiency.

Continuous Batching and Mixed Prefill

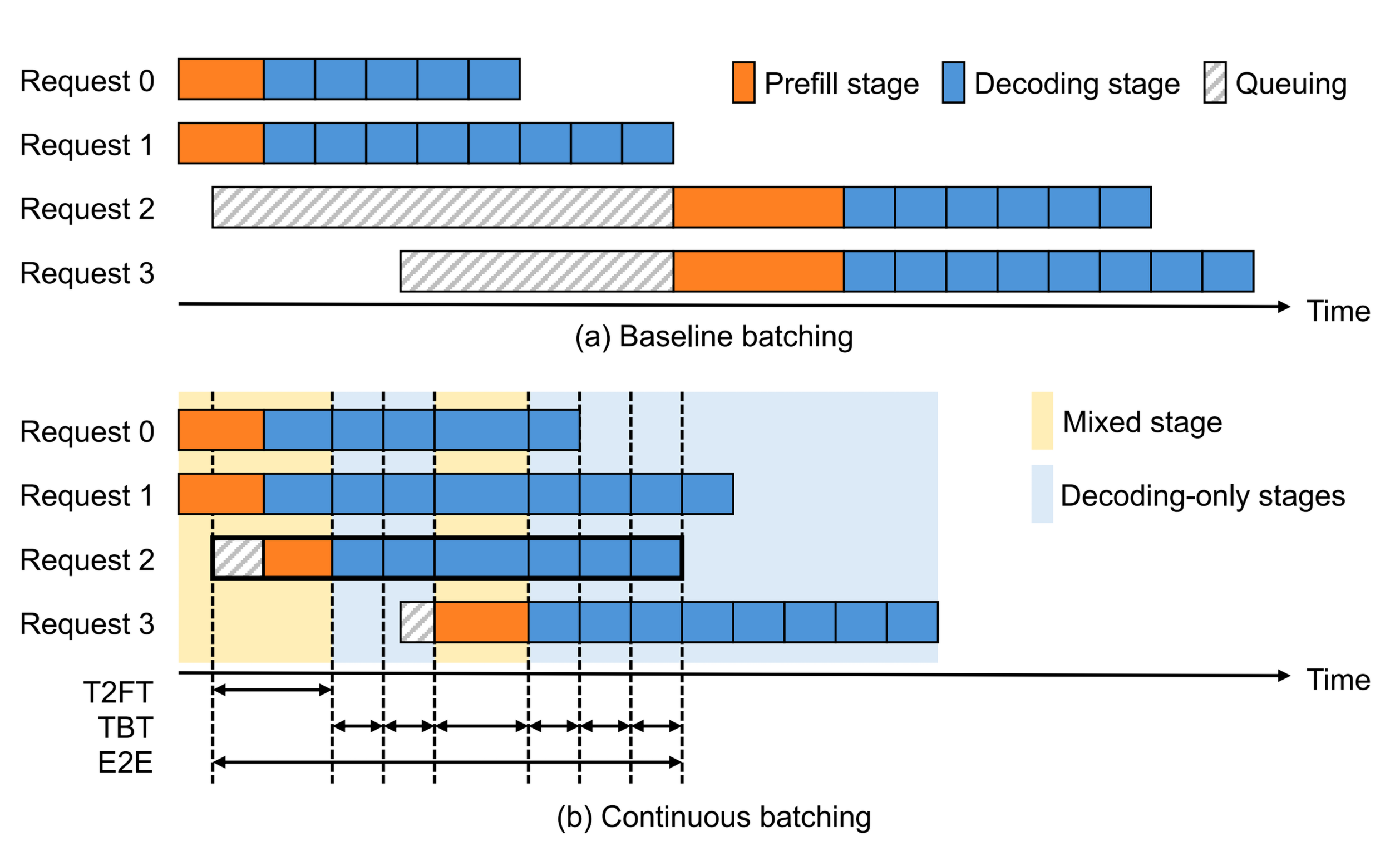

Static batching requires all requests in a batch to complete before new requests can be scheduled, leading to GPU underutilization when request lengths vary. Orca

Early continuous batching implementations processed either prefill or decode requests in each step, but not both simultaneously. Modern systems extend this by allowing prefill and decode requests to be combined within the same iteration.
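The scheduling difference between static and continuous batching can be illustrated with a toy simulation. This is a deliberately simplified sketch: it models decode steps only, ignores prefill, and assumes every iteration costs the same. The function names and request lengths are hypothetical.

```python
from collections import deque

def static_batching_steps(output_lens, batch_size):
    """Each batch runs until its longest request has finished."""
    steps = 0
    for i in range(0, len(output_lens), batch_size):
        steps += max(output_lens[i:i + batch_size])
    return steps

def continuous_batching_steps(output_lens, batch_size):
    """Free slots are refilled from the queue before every iteration."""
    queue = deque(output_lens)
    running = []  # remaining decode steps of each active request
    steps = 0
    while queue or running:
        while queue and len(running) < batch_size:
            running.append(queue.popleft())
        steps += 1
        # Every active request produces one token; finished ones leave.
        running = [r - 1 for r in running if r > 1]
    return steps

# Hypothetical output lengths (in tokens) for eight requests.
lens = [2, 8, 2, 2, 8, 2, 2, 2]
static_steps = static_batching_steps(lens, batch_size=2)
continuous_steps = continuous_batching_steps(lens, batch_size=2)
print(f"static: {static_steps} iterations, continuous: {continuous_steps} iterations")
```

In the static schedule, short requests sit idle in a finished slot while the longest request in their batch keeps decoding; the continuous schedule hands that slot to the next queued request immediately.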

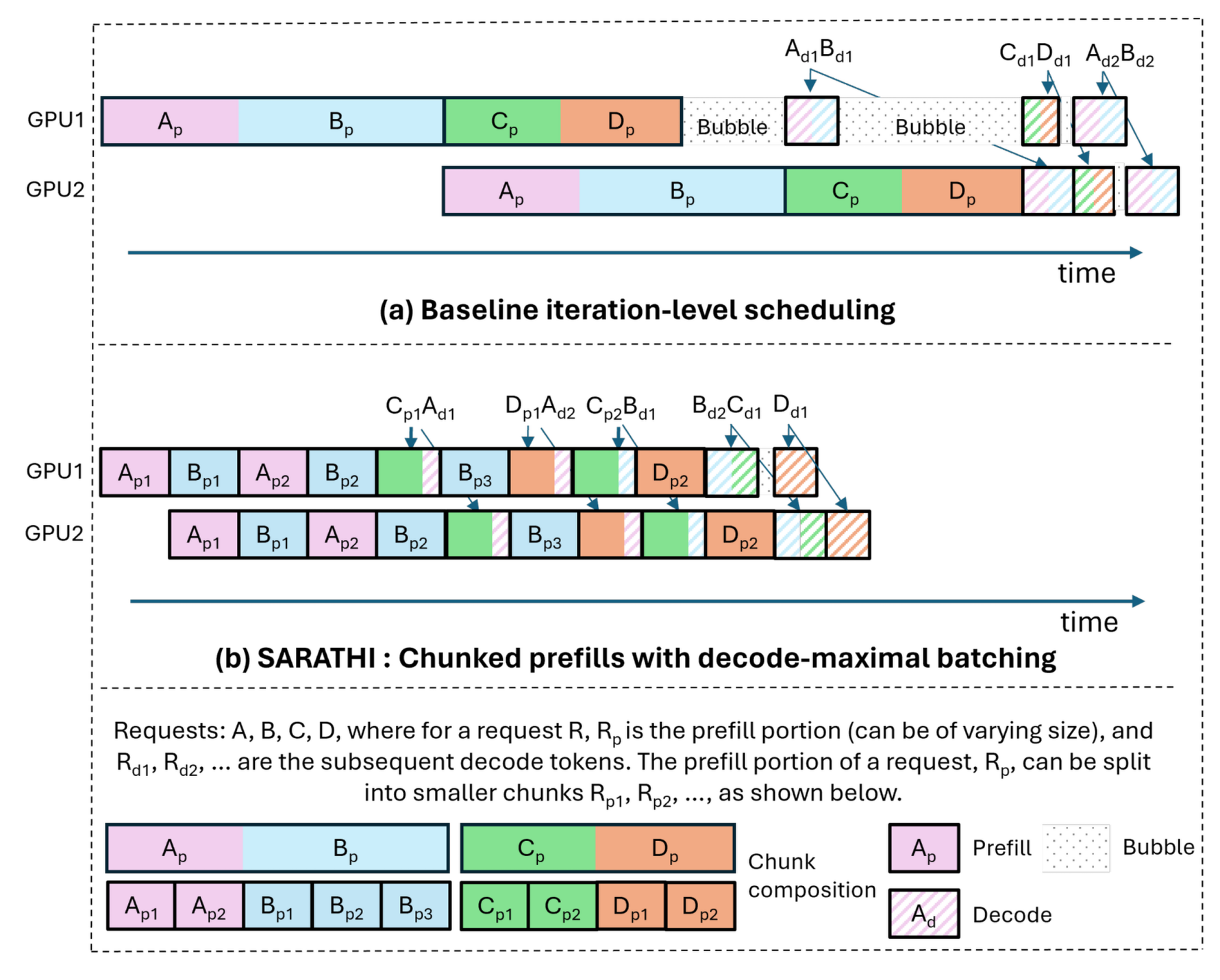

Chunked Prefill

Combining prefill and decode requests in the same batch introduces a scheduling challenge: prefill is compute-bound and processes many tokens in parallel, while decode is memory-bound and generates tokens sequentially. Without careful management, long prefills can stall ongoing decodes for several seconds, causing latency spikes. Sarathi

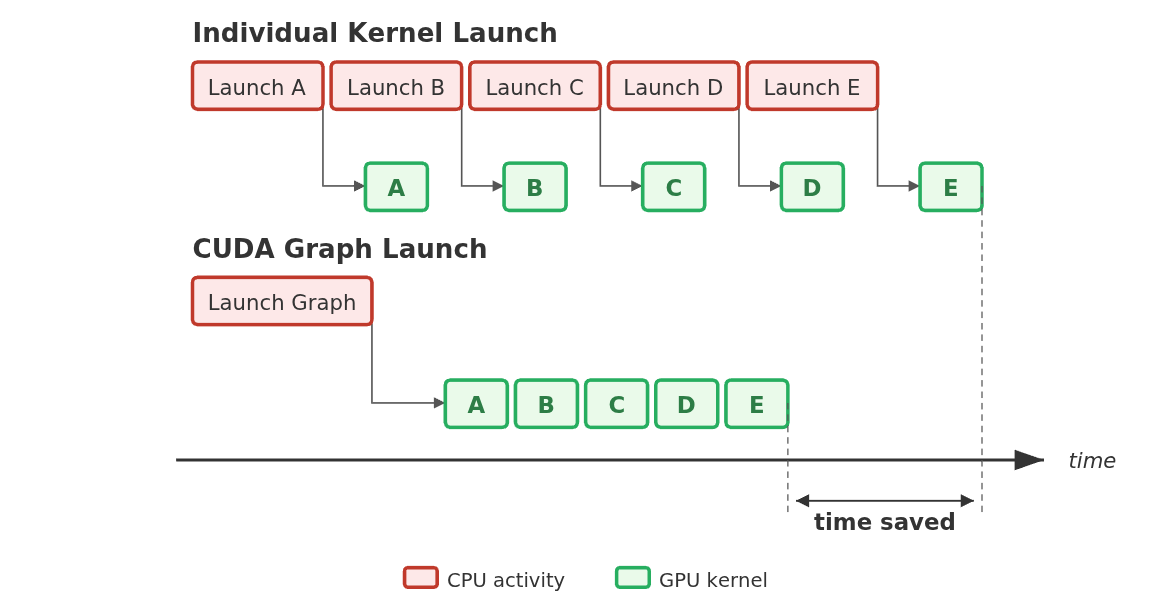

CUDA Graphs

During the decode phase, each generation step processes only a single token per request, so the individual GPU kernels are very short. As a result, the time the CPU spends launching these kernels often exceeds their execution time on the GPU, a problem known as kernel launch overhead. CUDA Graphs address this bottleneck by capturing a sequence of kernel operations into a directed acyclic graph that can be launched with a single CPU operation, drastically reducing overhead and improving overall GPU utilization.

However, CUDA Graphs impose strict execution constraints: they require static memory addresses and can only capture operations that run entirely on the GPU. If the forward pass contains unsupported operations, such as certain attention variants or CPU logic, modern serving engines employ piecewise CUDA Graphs, which split execution into capturable and uncapturable segments. The system then benefits from reduced launch overhead where possible while maintaining correctness.

Support for CUDA graphs depends heavily on the underlying attention backend. Some backends support full graph capture for all computations, while others require piecewise graphs or eager execution for batches with varying sizes.

Paged Attention

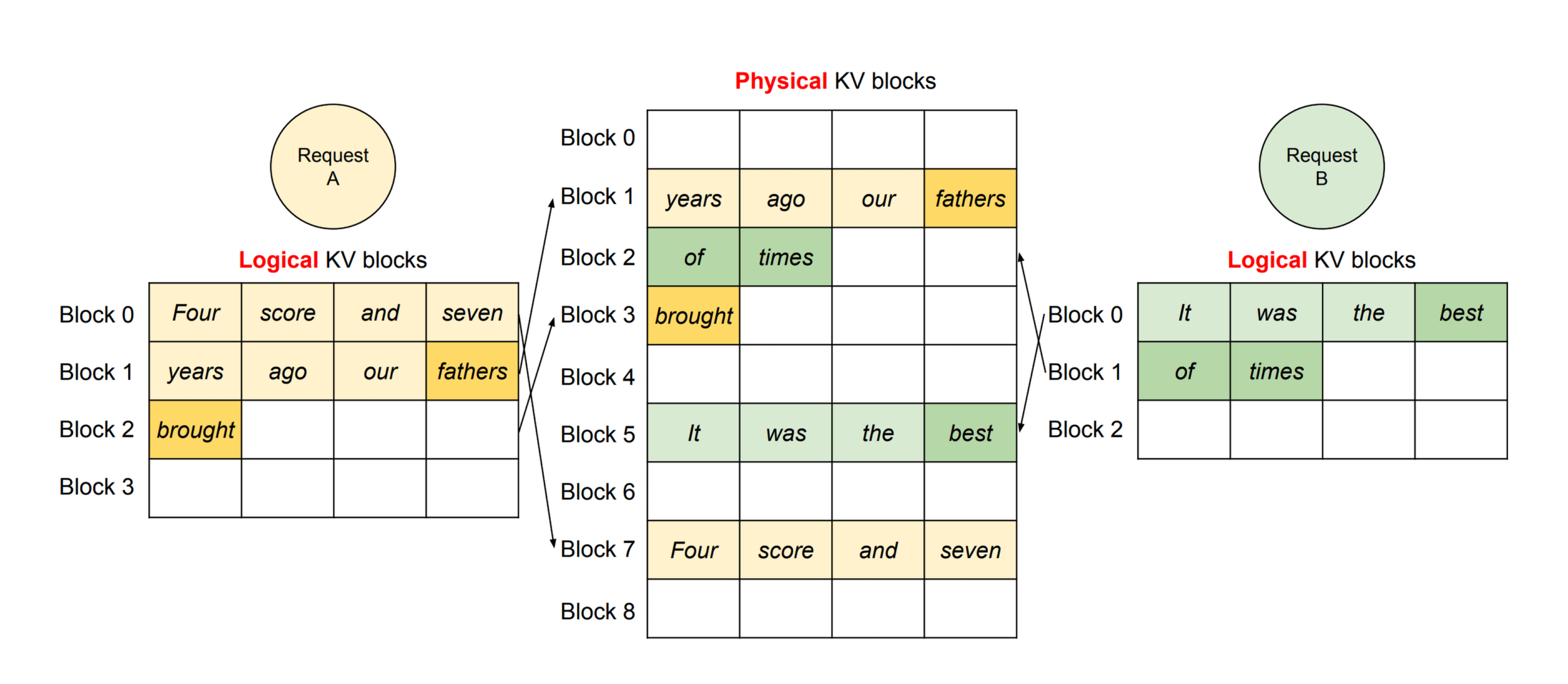

Prior to dynamic memory management, LLM serving systems allocated contiguous memory for each request’s KV cache based on the maximum supported sequence length. However, most requests generate far fewer tokens than this maximum, leading to significant GPU memory waste due to internal fragmentation; studies showed that effective memory utilization could be as low as 20%.

PagedAttention addresses this by applying virtual memory concepts to KV cache management. Instead of allocating contiguous memory, it partitions the KV cache into fixed-size blocks and allocates them on demand. Analogous to how operating systems map virtual pages to physical frames, a block table maps logical blocks to physical blocks. This approach reduces memory waste to under 4% and enables flexible memory sharing across requests. For example, when multiple requests share a common prefix, their KV cache blocks can point to the same physical memory using reference counting and copy-on-write semantics. PagedAttention is the core innovation behind vLLM.
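The bookkeeping behind this idea fits in a few lines. The following is a minimal sketch of a block table with reference counting and copy-on-write. The class and method names are hypothetical and do not mirror vLLM's actual implementation, and the sketch tracks block IDs only, not the KV tensors themselves.

```python
class BlockAllocator:
    """Hands out physical block IDs and tracks how many sequences use each."""

    def __init__(self, num_blocks):
        self.free = list(range(num_blocks))
        self.ref_count = [0] * num_blocks

    def allocate(self):
        if not self.free:
            raise MemoryError("out of KV cache blocks")
        block = self.free.pop()
        self.ref_count[block] = 1
        return block

    def share(self, block):
        self.ref_count[block] += 1
        return block

    def release(self, block):
        self.ref_count[block] -= 1
        if self.ref_count[block] == 0:
            self.free.append(block)


class Sequence:
    """One request; block_table maps logical block index -> physical block."""

    def __init__(self, allocator, block_size):
        self.allocator = allocator
        self.block_size = block_size
        self.block_table = []
        self.num_tokens = 0

    def append_token(self):
        if self.num_tokens % self.block_size == 0:
            # Last block is full (or there is none yet): allocate on demand.
            self.block_table.append(self.allocator.allocate())
        else:
            last = self.block_table[-1]
            if self.allocator.ref_count[last] > 1:
                # Copy-on-write: a real system would also copy the block's
                # KV contents into the new physical block here.
                self.allocator.release(last)
                self.block_table[-1] = self.allocator.allocate()
        self.num_tokens += 1

    def fork(self):
        """Create a sequence that shares all existing blocks with this one."""
        child = Sequence(self.allocator, self.block_size)
        child.block_table = [self.allocator.share(b) for b in self.block_table]
        child.num_tokens = self.num_tokens
        return child


allocator = BlockAllocator(num_blocks=8)
parent = Sequence(allocator, block_size=4)
for _ in range(6):            # 6 tokens -> one full block, one partial block
    parent.append_token()
child = parent.fork()         # shares both blocks, no memory copied
child.append_token()          # writes into the shared partial block -> CoW
print(parent.block_table, child.block_table)
```

After the fork, the full first block stays shared, while the partially filled second block is duplicated only once the child writes to it.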

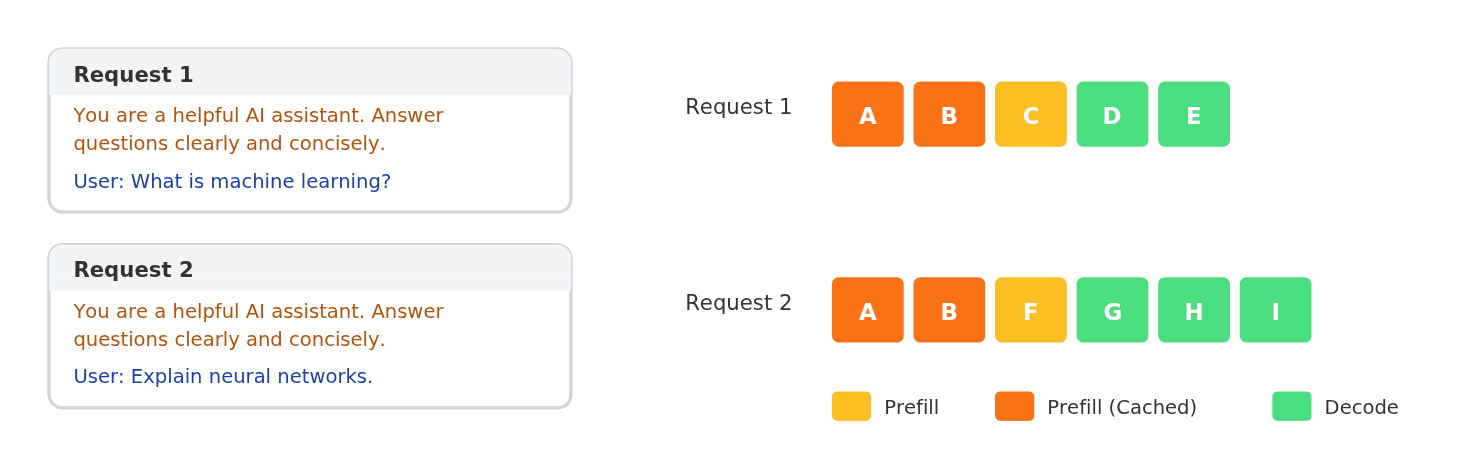

Prefix Caching

Many real-world LLM workloads exhibit identical prefixes — multi-turn conversations reuse chat history, few-shot prompting repeats the same examples, and API calls often share system prompts. Without prefix caching, the KV cache for these shared prefixes must be recomputed for each request, wasting computation and increasing latency. SGLang

Quantization

LLM inference is typically performed in 16-bit floating point formats such as FP16 or BF16, where each parameter occupies 2 bytes. Quantization reduces parameter precision to fewer bits, decreasing both memory footprint and memory bandwidth requirements. For a model with $P$ parameters, moving from 16-bit to 8-bit representation halves the memory required from $2P$ to $P$ bytes.
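As a small illustration of the memory effect, the sketch below quantizes a random FP16 weight matrix to 8 bits. It uses plain symmetric per-tensor INT8 rounding as a stand-in; this is the simplest possible scheme and not one of the specific quantization methods discussed in this post.

```python
import numpy as np

rng = np.random.default_rng(0)
w_fp16 = rng.standard_normal((256, 256)).astype(np.float16)

# Symmetric per-tensor quantization: map [-max|w|, +max|w|] onto [-127, 127].
w_fp32 = w_fp16.astype(np.float32)
scale = float(np.abs(w_fp32).max()) / 127.0
w_int8 = np.clip(np.round(w_fp32 / scale), -127, 127).astype(np.int8)

# Dequantize to inspect the rounding error introduced by the 8-bit grid.
w_dequant = w_int8.astype(np.float32) * scale
max_err = float(np.abs(w_dequant - w_fp32).max())

bytes_fp16 = w_fp16.nbytes   # 2 bytes per parameter
bytes_int8 = w_int8.nbytes   # 1 byte per parameter
print(f"FP16: {bytes_fp16} B, INT8: {bytes_int8} B, max abs error: {max_err:.4f}")
```

The storage halves exactly, at the cost of a rounding error bounded by half a quantization step; practical methods use finer-grained scales to keep that error small.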

Multiple quantization methods exist. Weight-only quantization methods

The H100 GPU features fourth-generation Tensor Cores that support FP8 arithmetic at twice the peak throughput of FP16 or BF16.

The NVIDIA GH200 Grace Hopper Superchip

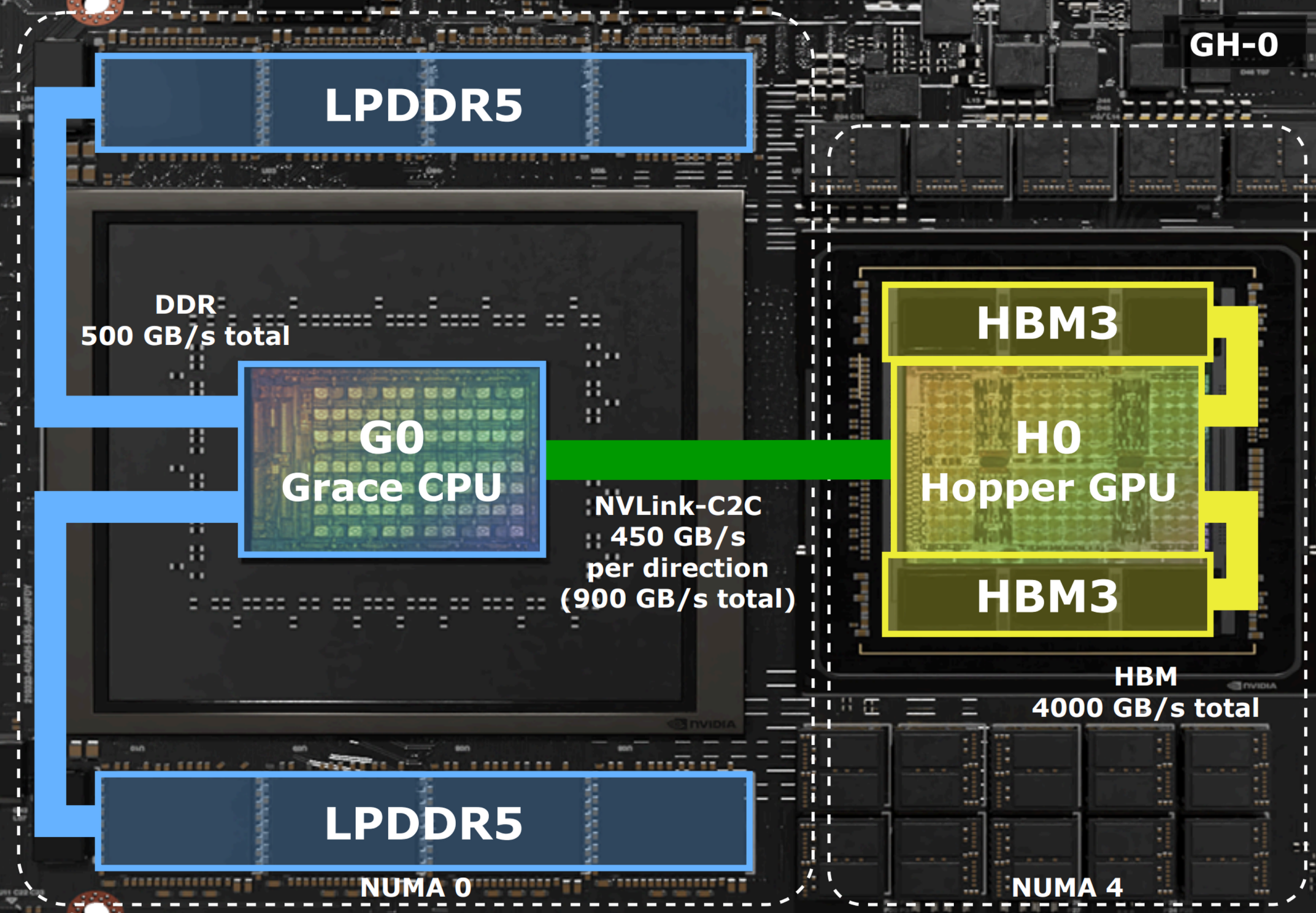

The growing demand for GPU memory by LLMs, combined with challenges in manufacturing large HBM capacities, has driven hardware vendors to explore tightly coupled heterogeneous architectures. NVIDIA’s GH200 Grace Hopper Superchip integrates a 72-core Grace CPU with an H100 GPU via NVLink-C2C, a coherent interconnect delivering 900 GB/s bidirectional bandwidth, approximately 7× that of PCIe Gen5.

Superchip Architecture

The Grace CPU features 72 Arm Neoverse V2 cores with up to 480 GB of LPDDR5X memory providing approximately 500 GB/s bandwidth. The Hopper GPU provides 96 GB of HBM3 with over 4 TB/s bandwidth. NVLink-C2C connects the two at 900 GB/s bidirectional bandwidth and enables cache-coherent access across both memory domains at 64-byte granularity, supporting direct loads, stores, and atomic operations between CPU and GPU memory without explicit copies.

Multiple GH200 units can be connected via NVLink Switch, with up to 32 superchips forming a single cache-coherent system where all GPUs communicate at 900 GB/s bidirectional bandwidth.

Memory Architecture

The GH200 presents a hierarchical memory system with distinct bandwidth characteristics depending on the data path. The Grace CPU provides up to 480 GB of LPDDR5X memory with approximately 500 GB/s bandwidth, while the Hopper GPU provides 96 GB of HBM3 with over 4 TB/s bandwidth. This creates an asymmetric system: HBM offers roughly 8× higher bandwidth but significantly less capacity per dollar than CPU memory.

The theoretical bandwidth for data movement between memory domains depends on the transfer direction and initiating processor. GPU-initiated copies between CPU and GPU memory achieve up to 450 GB/s per direction, bounded by the C2C interconnect (half of its 900 GB/s bidirectional bandwidth).
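To get a feel for what these peak numbers mean for offloading, the sketch below computes the ideal time to move a fixed amount of data over each path. The HBM3, LPDDR5X, and NVLink-C2C figures are the theoretical values quoted above; the PCIe Gen5 x16 value of 64 GB/s per direction is my assumption, consistent with the roughly 7× ratio. The 1 GB payload is hypothetical, and real transfers will be slower than these lower bounds.

```python
GB = 1e9

# Peak bandwidths in GB/s. The PCIe value is an assumption (see lead-in).
bandwidth_gb_s = {
    "hbm3": 4000.0,        # GPU-local HBM3
    "lpddr5x": 500.0,      # CPU-local LPDDR5X
    "nvlink_c2c": 450.0,   # CPU <-> GPU, one direction
    "pcie_gen5": 64.0,     # x16, one direction (assumed)
}

def transfer_ms(num_bytes, gb_per_s):
    """Ideal transfer time in milliseconds at peak bandwidth."""
    return num_bytes / (gb_per_s * GB) * 1e3

payload = 1 * GB  # hypothetical: 1 GB of offloaded weights
times = {name: transfer_ms(payload, bw) for name, bw in bandwidth_gb_s.items()}

for name, ms in times.items():
    print(f"{name:>10}: {ms:6.2f} ms per GB")
```

Under these assumptions, fetching weights over NVLink-C2C is about as fast as reading them from CPU memory directly, and roughly 7× faster than over PCIe Gen5, but still about 9× slower than reading them from HBM.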

Memory Model

The GH200 exposes its memory as two NUMA nodes: LPDDR5X memory with affinity to Grace (NUMA node 0) and HBM3 memory with affinity to Hopper (NUMA node 1). Memory placement follows a first-touch policy for standard allocations, or can be explicitly controlled via numactl or libnuma.

CUDA provides several approaches for CPU-GPU data movement. Explicit copies via cudaMemcpy give the programmer full control over when data moves. Managed memory (cudaMallocManaged) provides a unified address space where the CUDA driver automatically migrates pages between CPU and GPU on demand — when a processor accesses a non-local page, a fault triggers migration. Pinned memory (cudaMallocHost) allows the GPU to directly access CPU memory without migration, though on PCIe systems this incurs high latency and limited bandwidth.

The GH200 changes the performance tradeoffs of these options. NVLink-C2C provides cache-coherent access with address translations handled by the Address Translation Service (ATS) at cache-line granularity (64 bytes). The table below summarizes the available memory types.

| Type | API | Placement | GPU Access |
|---|---|---|---|
| System | malloc, mmap | First touch | ATS |
| Device | cudaMalloc | HBM | Direct |
| Managed | cudaMallocManaged | First touch | ATS/Direct |
| Pinned | cudaMallocHost | DDR | DMA |

The Offloading Landscape

Historically, systems relying on standard PCIe buses treated offloading strictly as a capacity fallback mechanism to avoid out-of-memory errors. Due to severe bandwidth bottlenecks, developers avoided data transfers between the CPU and GPU whenever possible. The extreme bandwidth of tightly coupled Superchips fundamentally changes this paradigm. It transforms offloading from a mere fallback into an active strategy for maximizing throughput. Existing systems that leverage the NVLink-C2C connection often target the highly volatile KV cache, which creates significant bidirectional memory traffic. Additionally, systems that focus on weight offloading break compatibility with crucial serving optimizations like prefix caching and CUDA graphs.

The table below summarizes recent LLM memory optimization systems based on their offloading strategies, targeted assets, and the interconnect they were designed for and evaluated on.

| System | Strategy | Asset | Interconnect | Prefix Caching | CUDA Graphs |
|---|---|---|---|---|---|
| ZeRO-Inference | Static Offloading | Weights | PCIe | No | No |
| FlexGen | Static Offloading | Weights, KV | PCIe | No | No |
| LMCache | Dynamic Offloading | KV Cache | PCIe / Network | Yes | Yes |
| kvcached | Dynamic Allocation | KV Cache | None | No | Yes |
| xLLM | Dynamic Offloading | KV Cache | PCIe / SSD | Yes | Yes |
| eLLM | Dynamic Allocation | KV Cache, Activations | None | No | Yes |
| Pie | Dynamic Offloading | KV Cache | NVLink-C2C | No | No |
| SuperInfer | Dynamic Offloading | KV Cache | NVLink-C2C | No | Yes |
| Oneiros | Dynamic Offloading | Weights | NVLink-C2C | No | No |
| Ours | Dynamic Offloading | Weights | NVLink-C2C | Yes | Yes |

Offloading to Accelerate LLM Serving

As model sizes grow and GPU HBM remains expensive and capacity-limited, offloading model weights and KV cache to CPU memory or storage has emerged as a practical approach for both running large models on resource-constrained systems and improving serving efficiency on standard hardware.

ZeRO-Inference

FlexGen

LMCache

Elastic Memory Management

Modern LLM serving systems manage KV caches through page table-based virtualization (e.g., PagedAttention), but the total memory reserved for KV cache is still statically allocated. Recent work addresses this limitation by employing CUDA virtual memory APIs to enable truly dynamic allocation.

kvcached

xLLM

eLLM

Optimizing LLM Serving on Superchips

The emergence of tightly coupled heterogeneous GPU/CPU architectures — often referred to as Superchips — such as the NVIDIA GH200, GB200, GB300, VR300, and AMD MI300A, presents new optimization opportunities for large-scale machine learning. These systems feature high-bandwidth CPU-GPU interconnects (e.g., 900 GB/s for NVLink-C2C on GH200) that fundamentally change the performance tradeoffs of offloading strategies. Existing solutions such as ZeRO-Inference were designed for slower interconnects (e.g., 64 GB/s for PCIe Gen4) and are therefore suboptimal on Superchips.

Pie

SuperInfer

Oneiros

What’s Next

This post covered the essential background for understanding LLM serving and the memory challenges that motivate offloading. In the next post, I will present our characterization of vLLM on the GH200, including bandwidth microbenchmarks, the impact of CUDA graphs, and why existing offloading mechanisms fall short. In the final post, I will present our dynamic parameter offloading system and its evaluation.